
Pass the Microsoft Certified: Fabric Analytics Engineer Associate DP-600 Questions and Answers with ExamsMirror

You need to ensure that Contoso can use version control to meet the data analytics requirements and the general requirements. What should you do?

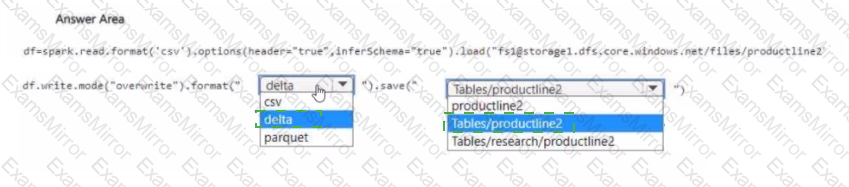

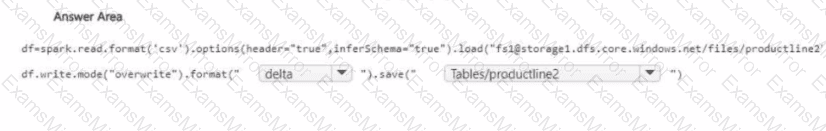

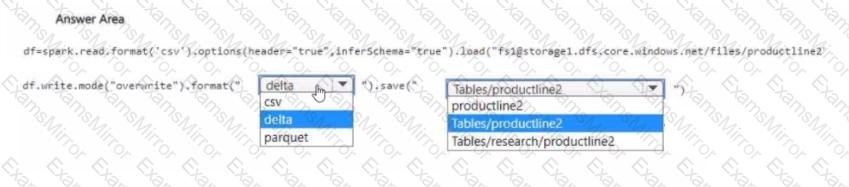

You need to migrate the Research division data for Productline2. The solution must meet the data preparation requirements. How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to refresh the Orders table of the Online Sales department. The solution must meet the semantic model requirements. What should you include in the solution?

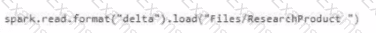

Which syntax should you use in a notebook to access the Research division data for Productline1?

A)

B)

C)

D)

You need to recommend which type of Fabric capacity SKU meets the data analytics requirements for the Research division. What should you recommend?

What should you use to implement calculation groups for the Research division semantic models?

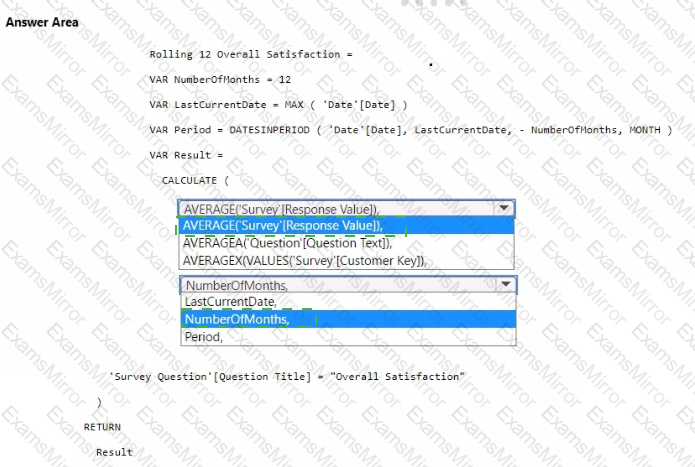

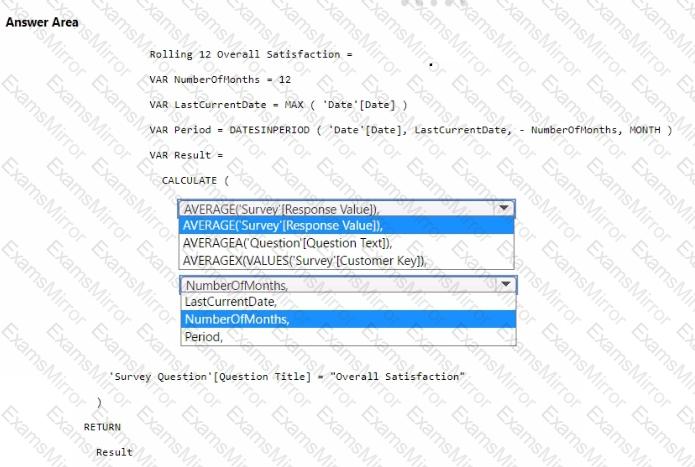

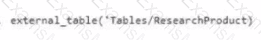

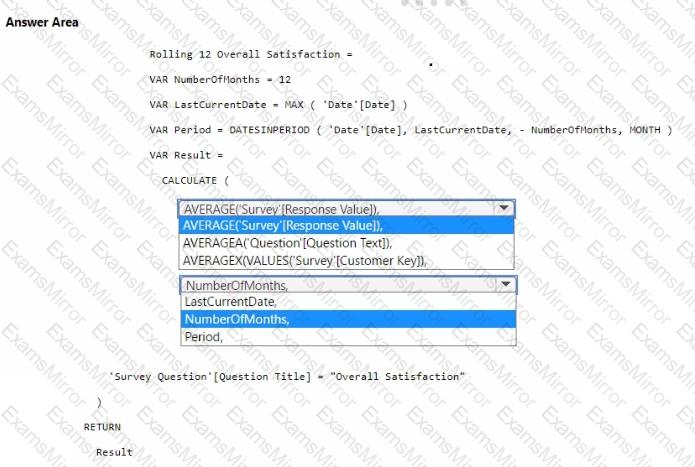

You need to create a DAX measure to calculate the average overall satisfaction score.

How should you complete the DAX code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Which type of data store should you recommend in the AnalyticsPOC workspace?

What should you recommend using to ingest the customer data into the data store in the AnalyticsPOC workspace?

You need to ensure the data loading activities in the AnalyticsPOC workspace are executed in the appropriate sequence. The solution must meet the technical requirements.

What should you do?
